ARCADE (Accurate and Rapid CAmera for Depth Estimation) is an innovative depth imaging research project that generates a depth image from each frame using only a single camera.

In ARCADE, the depth estimation challenge is tackled on two fronts simultaneously: the optics and the post-processing work in tandem to achieve the final result.

The light entering a camera lens carries depth information about every object in the phase of its wavefront. This information is lost in conventional imaging systems.

ARCADE is based on computational imaging, a method that manipulates the image acquisition process in order to encode this depth information in the captured image. In a conventional imaging system, all colors respond to depth changes in almost the same way, in the form of out-of-focus blur. Our imaging system introduces a unique dependency between depth and color response. This dependency is achieved using a phase mask, a very simple, low-cost micro-optical element that can easily be added to any manufactured lens.

This innovative imaging process encodes subtle depth cues in the acquired image.

We then use a Deep Neural Network to extract the depth of every pixel in our image by interpreting the out-of-focus blur cues. By doing so, we achieve depth segmentation of the acquired image.

We start by building a classification network that is trained to distinguish between 15 different depth slices. To train this network, we use artificially generated data consisting of various texture patches blurred by the different depth-dependent kernels.
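The data generation step can be sketched roughly as follows. This is only an illustration, not the project's actual pipeline: the real kernels come from the phase-mask optics, whereas here a per-channel Gaussian blur stands in for them. The helper names (`blur_sigma`, `make_patch`, `make_dataset`), the patch size, and the assumed in-focus slice for each color channel are all hypothetical.

```python
import numpy as np

NUM_DEPTH_SLICES = 15  # from the text; everything else below is an assumption


def gaussian_kernel(sigma, size=9):
    """Normalized 2D Gaussian, a stand-in for a real depth-dependent kernel."""
    ax = np.arange(size) - size // 2
    g = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    k = np.outer(g, g)
    return k / k.sum()


def blur_sigma(depth_idx, channel):
    """Hypothetical blur width: each color channel is sharpest at a different slice."""
    focus = (2, 7, 12)[channel]  # assumed in-focus slice for R, G, B
    return 0.5 + 0.4 * abs(depth_idx - focus)


def convolve2d_same(img, kernel):
    """Direct same-size convolution with edge padding (the kernel is symmetric)."""
    kh, kw = kernel.shape
    padded = np.pad(img, ((kh // 2, kh // 2), (kw // 2, kw // 2)), mode="edge")
    out = np.zeros_like(img, dtype=float)
    for dy in range(kh):
        for dx in range(kw):
            out += kernel[dy, dx] * padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out


def make_patch(rng, depth_idx, size=32):
    """Random RGB texture, blurred per channel according to its depth slice."""
    texture = rng.random((size, size, 3))
    channels = [
        convolve2d_same(texture[..., c], gaussian_kernel(blur_sigma(depth_idx, c)))
        for c in range(3)
    ]
    return np.stack(channels, axis=-1)


def make_dataset(n_per_slice=4, seed=0):
    """Return (patches, labels) with n_per_slice patches for each depth slice."""
    rng = np.random.default_rng(seed)
    patches, labels = [], []
    for d in range(NUM_DEPTH_SLICES):
        for _ in range(n_per_slice):
            patches.append(make_patch(rng, d))
            labels.append(d)
    return np.stack(patches), np.array(labels)
```

Calling `make_dataset()` returns an array of blurred patches and the matching depth-slice labels. Because each channel is blurred differently at each slice, the relative sharpness of the colors is the cue a classifier can learn from.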

Since our goal is fast processing time, we use a relatively shallow network. Such a shallow network can provide good depth estimation results thanks to the strong depth cues encoded in the image by the phase mask.

We then convert the classification network to a fully convolutional network, which assigns every pixel in the image to its depth slice. This modification is done by turning the fully connected layer into a 15-output convolution layer and adding a deconvolution layer. We train this network using artificial images of 3D animated scenes. The network we trained classifies over 93% of the pixels to within one depth slice of the ground truth.
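As a rough illustration of the conversion, the NumPy sketch below reuses the weights of a fully connected layer as a convolution kernel, so the same classifier slides over a larger feature map and yields one 15-way score vector per location. The feature-map shape, the kernel size `k`, and all function names are assumptions made for this example. The deconvolution (upsampling) layer is not shown. The last helper computes a "within one depth slice" accuracy of the kind quoted above.

```python
import numpy as np

NUM_CLASSES = 15  # depth slices, from the text


def fc_forward(features, weights, bias):
    """Fully connected layer applied to one flattened feature patch of shape (C, k, k)."""
    return weights @ features.reshape(-1) + bias


def fc_as_conv(feature_map, weights, bias, k):
    """Apply the same weights as a k x k convolution over a larger (C, H, W) map."""
    c, h, w = feature_map.shape
    kernels = weights.reshape(NUM_CLASSES, c, k, k)
    out = np.empty((NUM_CLASSES, h - k + 1, w - k + 1))
    for y in range(h - k + 1):
        for x in range(w - k + 1):
            window = feature_map[:, y:y + k, x:x + k]
            # Contract over (C, k, k): one score per depth slice at this location.
            out[:, y, x] = np.tensordot(kernels, window, axes=3) + bias
    return out


def within_one_slice_accuracy(pred, truth):
    """Fraction of pixels whose predicted slice is at most one slice from the truth."""
    diff = np.abs(pred.astype(int) - truth.astype(int))
    return float(np.mean(diff <= 1))
```

Taking `fc_as_conv(...).argmax(axis=0)` gives a per-location depth-slice map. At any single location the scores match what `fc_forward` would return for the corresponding patch, which is why the trained classifier weights can be carried over unchanged.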

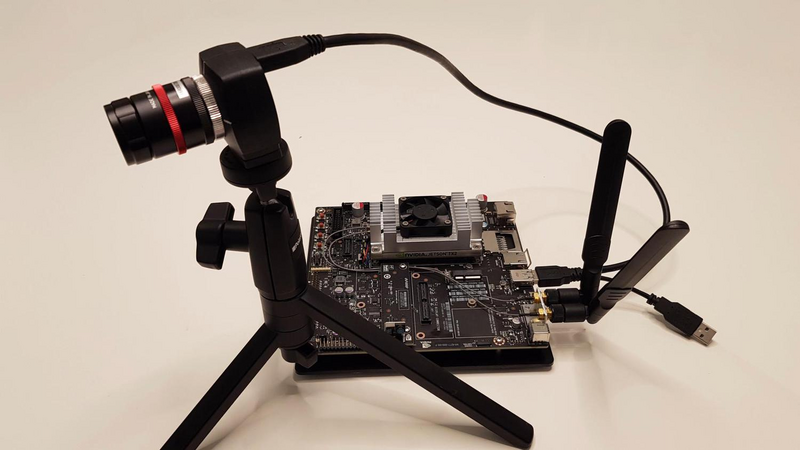

Finally, we use TensorRT to optimize our trained model for deployment on the NVIDIA Jetson TX2. By doing so, we achieve an inference time of less than half a second on images of over qHD resolution.

To conclude, the ARCADE project proves the concept of an NVIDIA Jetson TX2-based system that generates depth images using a single camera, without any expensive optical or mechanical hardware.

The great achievement of this project, as we see it, is making depth imaging accessible.

Since the optical element we manufacture and use costs next to nothing, every machine powered by an NVIDIA Jetson TX2 can now generate depth images at negligible additional cost.

We hope this project will enable depth imaging for various existing applications and may also inspire the development of new ones.
